Introduction: cybercrime trends and evolution

Over the past few years, the Internet has become a dangerous place. Initially designed to accommodate a relatively small number of users, it grew far beyond anything its creators could have anticipated. There are currently over 1.5 billion Internet users and this number continues to increase as technology becomes ever more affordable.

Criminals have also noticed this trend and they soon realized that committing crimes over the Internet – now generally referred to as ‘cybercrime’ – has certain advantages.

Firstly, cybercrime is low risk: since it transcends geo-political borders, it is difficult for law enforcement agencies to catch the perpetrators. Moreover, the costs of conducting cross-border investigations and prosecutions can be high, meaning this is only worth doing in major cases. Secondly, cybercrime is easy: there is extensive documentation on hacking and virus writing freely available on the Internet, meaning that no sophisticated knowledge or skill is required. These are the two main factors that have led to cybercrime becoming a multi-billion dollar industry, truly a self-sustaining ecosystem of its own.

Both security companies and software developers wage a constant battle with cybercriminals; their aim is to develop protection for Internet users and software that is secure. Of course, cybercriminals constantly change their tactics in order to combat these countermeasures, and this has resulted in two marked current trends.

First of all, there is the deployment of malware using 0-day vulnerabilities. 0-day vulnerabilities are vulnerabilities for which a patch is not yet available, and they can be used to infect even fully up-to-date computer systems which are not running a dedicated security solution. 0-day vulnerabilities are a valuable commodity due to their potentially serious impact, and they usually sell for tens of thousands of dollars on the black market.

Secondly, we are seeing a spike in malware designed to steal confidential information that can later be sold on the black market. Such information includes credit card numbers, bank account details, passwords for websites such as eBay or PayPal, and even passwords for online games such as World of Warcraft.

One obvious reason why cybercrime has become so widespread is that it is profitable, and this profitability will always drive the development of new cybercrime technologies.

In addition to recent developments in the area of cybercrime, another marked trend is the distribution of malware via the World Wide Web. Following outbreaks caused by email worms such as Melissa around the turn of the millennium, many security companies focused their efforts on ensuring their solutions stopped malicious attachments. Sometimes this went as far as removing all executable attachments from messages.

In recent years, however, the Web has become the main distribution point for malware. Malicious programs are hosted on websites; users are then either tricked into running these programs manually, or exploits are used to execute the malware automatically on victim machines.

At Kaspersky Lab, we’ve been monitoring this trend with growing concern.

Statistics

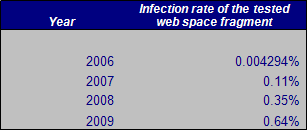

Over the past three years, we’ve monitored between 100,000 and 300,000 otherwise “clean” websites in order to identify when they become distribution points for malware. The number of websites monitored has grown over time as more domains have been registered.

The table above shows the maximum infection rate recorded for monitored websites during each year. There has been a sharp rise, from roughly 1 infected website in every 20,000 in 2006 to the current maximum of 1 infected website in every 150 at the beginning of 2009. The proportion of infected websites now fluctuates around this level. This may mean that a saturation point has been reached, where every website that can be infected already has been. However, the figure rises and falls as attackers discover new vulnerabilities and tools that allow them to take over new hosts.
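The size of that jump is easy to check. The short Python sketch below uses only the two ratios quoted above, converts them to percentages and divides one by the other; the variable names are illustrative.

```python
# Peak infection rates quoted in the text, written as "1 infected site in N".
rate_2006 = 1 / 20_000  # roughly 1 in 20,000 (2006)
rate_2009 = 1 / 150     # 1 in 150 (beginning of 2009)


def as_percent(rate: float) -> float:
    """Convert a fractional rate into a percentage."""
    return rate * 100


# How many times higher the 2009 peak is than the 2006 peak.
growth = rate_2009 / rate_2006

print(f"2006: {as_percent(rate_2006):.3f}% of monitored sites infected")  # 0.005%
print(f"2009: {as_percent(rate_2009):.3f}% of monitored sites infected")  # 0.667%
print(f"Growth factor: about {growth:.0f}x")                               # about 133x
```

A factor of roughly 133 is in line with the “over a hundred times” increase cited in the conclusions.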

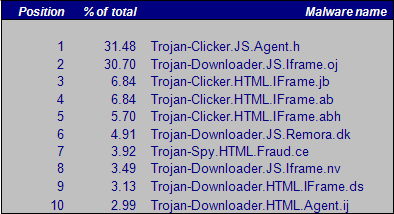

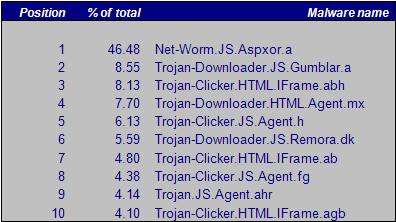

The next two tables show the malware most commonly detected on websites in 2008 and 2009.

Top 10 infections – 2008

Top 10 infections – 2009

In 2008, Trojan-Clicker.JS.Agent.h was found in the vast majority of cases, followed closely by Trojan-Downloader.JS.Iframe.oj.

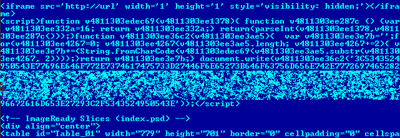

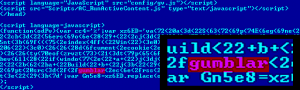

Example of a page source infected with Trojan-Clicker.JS.Agent.h


Decoded Trojan-Clicker.JS.Agent.h

Trojan-Clicker.JS.Agent.h is a typical example of what most website malware injections looked like in 2008, and still look like in 2009. A small fragment of JavaScript code is added to the page, usually obfuscated to hinder analysis. In the code shown above, the obfuscation simply replaces the ASCII characters of the malicious code with their hex codes. Once decoded, the code is usually an iframe that leads to a website hosting exploits. The IP address varies, and there are many deployment points. The entry page of the malicious website usually hosts exploits for IE, Firefox and Opera. Trojan-Downloader.JS.Iframe.oj, the second most common piece of malware, works in a very similar way.
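Because this kind of obfuscation is just a character-by-character hex encoding, reversing it is mechanical. The Python sketch below assumes the JavaScript `\xNN` escape notation (the original sample may use another form, such as `%NN` or numeric character codes); the function names and the `example.invalid` URL are hypothetical.

```python
import re

# Matches JavaScript-style hex escapes such as \x3c (assumed notation).
HEX_ESCAPE = re.compile(r"\\x([0-9a-fA-F]{2})")


def decode_hex_escapes(obfuscated: str) -> str:
    """Replace every \\xNN escape with the character whose code is NN."""
    return HEX_ESCAPE.sub(lambda m: chr(int(m.group(1), 16)), obfuscated)


def find_iframe_sources(decoded: str) -> list[str]:
    """Return the src values of any <iframe> tags in the decoded text."""
    return re.findall(r"<iframe[^>]*\bsrc=[\"']?([^\"'\s>]+)", decoded, re.IGNORECASE)


# Hypothetical sample: a harmless hidden iframe encoded as hex escapes.
plain = '<iframe src="http://example.invalid/x" width=0 height=0></iframe>'
sample = "".join(f"\\x{ord(c):02x}" for c in plain)

decoded = decode_hex_escapes(sample)
print(decoded)
print(find_iframe_sources(decoded))  # ['http://example.invalid/x']
```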

There were two very interesting cases in 2009, the first of which was Net-Worm.JS.Aspxor.a. Although this malware was first detected back in July 2008, it became far more widespread in 2009. It uses a kit that finds SQL injection vulnerabilities in websites, which are then exploited to insert malicious iframes.

Another very interesting case is “Gumblar”, named after the Chinese domain that was used as an exploitation point. The “gumblar” string, visible in the obfuscated JavaScript which is added to websites, is a clear sign that a website has been compromised.

Typical Gumblar injection in a website

Once deobfuscated, the malicious Gumblar code looks like this:

The “gumblar.cn” domain has been taken down, but unfortunately, the bad guys have since switched to new domains which are being used to conduct similar attacks.
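Since the “gumblar” string is a visible marker of compromise, a webmaster can search a copy of the site's files for it. The Python sketch below is a minimal illustration, assuming the site's files are available locally; the set of file extensions and the function name are assumptions, and because obfuscation may split or encode the string, a miss does not prove a file is clean.

```python
import tempfile
from pathlib import Path

# Marker string named in the text.
MARKER = "gumblar"
# File types to inspect: an assumption, adjust to the site's technology.
EXTENSIONS = {".html", ".htm", ".js", ".php"}


def find_marker(site_root: str, marker: str = MARKER) -> list[Path]:
    """Return the files under site_root whose text contains the marker."""
    hits = []
    for path in Path(site_root).rglob("*"):
        if path.is_file() and path.suffix.lower() in EXTENSIONS:
            text = path.read_text(encoding="utf-8", errors="ignore")
            if marker.lower() in text.lower():
                hits.append(path)
    return sorted(hits)


# Demo on a throwaway directory holding one clean and one tampered page.
with tempfile.TemporaryDirectory() as root:
    Path(root, "index.html").write_text("<html><body>Hello</body></html>")
    Path(root, "news.html").write_text("<script>/* gumblar */</script>")
    found = [p.name for p in find_marker(root)]

print(found)  # ['news.html']
```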

Infection and distribution methods

There are currently three main ways in which websites can become infected with malware.

The first popular method is to exploit vulnerabilities in the website itself, such as SQL injection, that allow malicious code to be added. Attack tools such as ASPXor demonstrate this method: they can scan thousands of IP addresses at a time and inject malware into the vulnerable sites they find. Such attacks can often be seen in web server access logs.
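Spotting such attacks in access logs can be partly automated. The Python sketch below flags request lines that, once URL-decoded, match a few signatures typical of automated SQL injection. The signatures, the log format and the sample lines (using documentation IP ranges) are all assumptions for illustration, not an authoritative rule set.

```python
import re
from urllib.parse import unquote_plus

# Illustrative signatures of automated SQL injection attempts (assumed).
SQLI_PATTERNS = [
    re.compile(r"declare\s+@\w+", re.IGNORECASE),        # T-SQL variable declaration
    re.compile(r"cast\(\s*0x[0-9a-f]+", re.IGNORECASE),  # hex-encoded payload
    re.compile(r"\bexec(ute)?\s*\(", re.IGNORECASE),     # dynamic SQL execution
    re.compile(r"<iframe", re.IGNORECASE),               # iframe smuggled in the URL
]


def suspicious_requests(log_lines):
    """Yield (line_number, line) for log lines matching any signature."""
    for number, line in enumerate(log_lines, start=1):
        decoded = unquote_plus(line)  # undo %xx and '+' URL encoding
        if any(p.search(decoded) for p in SQLI_PATTERNS):
            yield number, line.rstrip("\n")


# Hypothetical access-log lines.
sample_log = [
    '192.0.2.10 - - [05/Jul/2009:10:00:01 +0000] "GET /news.asp?id=12 HTTP/1.1" 200 5120',
    '198.51.100.7 - - [05/Jul/2009:10:00:05 +0000] "GET /news.asp?id=12;'
    'DECLARE%20@S%20VARCHAR(4000);SET%20@S=CAST(0x4445434C415245%20AS%20VARCHAR(4000));'
    'EXEC(@S);-- HTTP/1.1" 500 310',
]

flagged = [number for number, _ in suspicious_requests(sample_log)]
print(flagged)  # [2]
```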

The second method involves infecting a web developer’s machine with malware which monitors the creation and upload of HTML files and then injects malicious code into these files.

Finally, the last common method is to infect a web developer, or anyone else with access to the hosting account, with a password-stealing Trojan (e.g. Trojan-Ransom.Win32.Agent.ey). Usually, the Trojan contacts a server via HTTP to transmit FTP account passwords harvested from popular FTP tools such as FileZilla or CuteFTP. The server-side component then logs the account access information in an SQL database. Later, a server-side tool goes through the SQL database, logs into each FTP account, fetches the index page, appends the Trojanized code and re-uploads the page.

Because in this last method the hosting account access details are compromised, it’s quite common for websites to get infected, for the developers to notice the infection or be alerted to it by site visitors, and for the site to be cleaned, only for it to be infected again the very next day.
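Because the server-side tool in this last method appends its code to the index page, one cheap check is to look for anything after the closing `</html>` tag. The Python sketch below assumes the injection lands at the very end of the file; that placement, the function name and the example URL are assumptions, and injected code can equally be placed elsewhere in a page.

```python
import re
from typing import Optional


def appended_after_html(page: str) -> Optional[str]:
    """Return any text that follows the last closing </html> tag.

    Legitimate pages rarely carry markup after </html>, so a non-empty
    result deserves a closer look. Returns None if there is no </html>.
    """
    closings = list(re.finditer(r"</html\s*>", page, re.IGNORECASE))
    if not closings:
        return None
    return page[closings[-1].end():].strip()


clean = "<html><body>Welcome</body></html>\n"
tampered = clean + '<script src="http://example.invalid/a.js"></script>\n'

print(repr(appended_after_html(clean)))  # ''
print(appended_after_html(tampered))     # <script src="http://example.invalid/a.js"></script>
```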

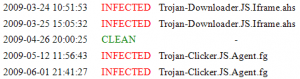

Example of a website (*.*.148.240) which gets infected, then cleaned, then infected again

Another common situation is when different cybercriminal groups get hold of the same vulnerability or hosting account details at the same time. A battle then begins, with each group attempting to infect the website with their piece of malware. An example of this is given below:

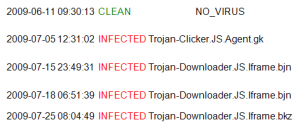

Sample scan report of a website (*.*.176.6) with multiple infections

On 11.06.2009, the website being monitored was clean; it was infected with Trojan-Clicker.JS.Agent.gk on 05.07.2009. Later, on 15.07.2009, a new piece of malware, Trojan-Downloader.JS.Iframe.bin, was injected into the website. Ten days later, the malware was replaced again. This is relatively common: many websites actually contain several pieces of malware, appended one after the other by different cybercriminal groups.
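A scan history like this one can be summarised automatically by comparing consecutive scans. In the Python sketch below, each scan is assumed to record the set of detected verdicts (an empty set meaning clean), so stacked infections from competing groups show up as additions without removals. The dates and verdicts come from the text; treating the 15.07.2009 injection as removing the earlier malware is inferred from “replaced again”, and the fourth scan is omitted because its verdict is not named.

```python
from datetime import date

# Scan history for the monitored site, taken from the dates in the text.
scans = [
    (date(2009, 6, 11), set()),
    (date(2009, 7, 5), {"Trojan-Clicker.JS.Agent.gk"}),
    (date(2009, 7, 15), {"Trojan-Downloader.JS.Iframe.bin"}),
]


def changes(history):
    """Yield (day, added, removed) whenever verdicts differ from the previous scan."""
    for (_, before), (day, after) in zip(history, history[1:]):
        added, removed = sorted(after - before), sorted(before - after)
        if added or removed:
            yield day, added, removed


for day, added, removed in changes(scans):
    print(f"{day:%d.%m.%Y}  added={added}  removed={removed}")
```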

Below is a checklist of actions which need to be taken whenever a website infection is detected:

- Identify everyone who has the website hosting access information; scan their systems with an up-to-date Internet security suite; remove any malware which is detected

- Change the hosting password to a new, strong one. Strong passwords contain letters, numbers and non-alphanumeric characters to make guessing the password difficult

- Replace all compromised files with clean copies

- Identify any backups that might contain infected files and clean them.
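To find which files need replacing, the live site can be compared against a known-clean reference copy. The Python sketch below hashes every file in both trees with SHA-256 and lists live files that differ or have no clean counterpart. It assumes a trustworthy reference exists (for example, the developer's original files); as the checklist notes, backups may themselves be infected, so the reference must be verified first. Function names are hypothetical.

```python
import hashlib
import tempfile
from pathlib import Path


def file_hashes(root: str) -> dict[str, str]:
    """Map each file's path, relative to root, to its SHA-256 digest."""
    base = Path(root)
    return {
        p.relative_to(base).as_posix(): hashlib.sha256(p.read_bytes()).hexdigest()
        for p in base.rglob("*")
        if p.is_file()
    }


def files_to_replace(live_root: str, clean_root: str) -> list[str]:
    """List live files that differ from, or are absent in, the clean copy."""
    live, clean = file_hashes(live_root), file_hashes(clean_root)
    return sorted(name for name, digest in live.items() if clean.get(name) != digest)


# Demo: two identical trees, then an injection into the live copy only.
with tempfile.TemporaryDirectory() as live, tempfile.TemporaryDirectory() as clean:
    for root in (live, clean):
        Path(root, "about.html").write_text("<html>About us</html>")
        Path(root, "index.html").write_text("<html>Home</html>")
    Path(live, "index.html").write_text("<html>Home</html><script>/* injected */</script>")
    changed = files_to_replace(live, clean)

print(changed)  # ['index.html']
```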

In our experience, it’s quite common for infected websites to get re-infected after they’ve been cleaned. Most of the time, though, this happens only once: although the action taken in response to the initial infection may be relatively superficial, the webmaster is likely to conduct a more thorough investigation the second time infection is discovered.

Evolution: the move to legitimate websites

A couple of years ago, when the web first came into wide use as a deployment point for malware, cybercriminals mostly relied on so-called bullet-proof hosting or hosting purchased with stolen credit cards. Noticing this trend, the security industry made a concerted effort to build contacts, which made it possible to take down some major malware hosting operations such as the US hosting provider McColo and the Estonian company EstDomains. Malware is still sometimes hosted on obviously malicious sites in countries such as China, where takedowns remain difficult; however, perhaps the most important development is that malware is now being hosted on otherwise clean and reputable domains.

Action and reaction

As mentioned above, adaptability is one of the most important aspects of the constant battle between cybercriminals and security companies. Both sides constantly change their tactics and deploy new technologies in an effort to counteract their opponents’ latest moves.

Modern browsers, such as Firefox 3.5, Chrome 2.0 and Internet Explorer 8.0, now come with built-in malware protection in the form of URL filtering. This is designed to keep users away from malicious websites that either contain exploits for known or unknown vulnerabilities or use social engineering for the purpose of identity theft.

For instance, both Firefox and Chrome use the Google Safe Browsing API, a free URL filtering service from Google. At the time of writing, the Google Safe Browsing API malware list contained around 300,000 entries for websites known to be malicious and more than 20,000 entries for phishing websites.

The Google Safe Browsing API takes a non-invasive approach to URL filtering. Instead of sending each URL to a third party for verification, as the IE8 Phishing Filter does, the browser checks URLs against a downloaded list of MD5 checksums. For this to be effective, the list needs to be updated periodically; the recommended interval is 30 minutes. This method does have a drawback: the number of malicious websites is larger than the number of entries in the MD5 list. To keep the list to a manageable size (currently about 12MB), it probably includes only the most commonly encountered malicious websites. This means that even computers running applications that implement such technologies can still be infected by malware placed on sites that are not listed.
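The core idea of a local checksum list can be sketched in a few lines. The Python example below is a simplified illustration, not the actual Safe Browsing protocol: real clients follow a detailed canonicalisation procedure defined by the API and check several host and path combinations, whereas this sketch only lower-cases the scheme and host and drops the fragment. The class and function names are hypothetical. It also shows the drawback described above: an unlisted malicious site simply passes.

```python
import hashlib
from urllib.parse import urlsplit, urlunsplit


def normalise(url: str) -> str:
    """Very rough canonicalisation: lower-case scheme and host, drop the fragment."""
    parts = urlsplit(url.strip())
    return urlunsplit((parts.scheme.lower(), parts.netloc.lower(), parts.path or "/", parts.query, ""))


def url_digest(url: str) -> str:
    """MD5 hex digest of the normalised URL."""
    return hashlib.md5(normalise(url).encode("utf-8")).hexdigest()


class LocalBlocklist:
    """Hypothetical client-side list of MD5 digests of known-bad URLs."""

    def __init__(self, digests):
        self.digests = set(digests)

    def update(self, new_digests):
        """Merge digests fetched during a periodic refresh (e.g. every 30 minutes)."""
        self.digests |= set(new_digests)

    def is_listed(self, url: str) -> bool:
        # Only the digest is compared locally; the URL is never sent anywhere.
        return url_digest(url) in self.digests


blocklist = LocalBlocklist([url_digest("http://malicious.example/exploit.html")])
print(blocklist.is_listed("http://MALICIOUS.example/exploit.html"))  # True
print(blocklist.is_listed("http://unlisted.example/exploit.html"))   # False
```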

However, the implementation of safe browsing technologies shows that browser developers have taken note of the trend for spreading malware via websites, and are taking steps to counteract it. In fact, built-in security protection in web browsers has effectively become a standard.

Conclusions

Over the past three years, the number of otherwise benign websites that get infected with malware has grown at an alarming rate. There are now over a hundred times more infected websites on the Internet than three years ago. High profile, high traffic websites are a valuable commodity for cybercriminals, as the pool of potential victims that can be infected via such websites will be larger than usual.

For webmasters, here are a few simple tips on how to stay safe:

- Use strong passwords for hosting accounts

- Use SCP/SSH/SFTP to upload files instead of FTP; this prevents the passwords from being sent in cleartext over the Internet

- Install and run a security solution

- Maintain several different backups that can be used to quickly restore the website if it is compromised.
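For the first tip, a password containing letters, numbers and non-alphanumeric characters can be generated with Python's standard `secrets` module. In the sketch below, the default length of 16 and the function name are assumptions; the loop simply retries until every character class is represented.

```python
import secrets
import string

# Character classes: lower- and upper-case letters, digits, punctuation.
CLASSES = [string.ascii_lowercase, string.ascii_uppercase, string.digits, string.punctuation]


def strong_password(length: int = 16) -> str:
    """Generate a random password containing at least one character from each class."""
    if length < len(CLASSES):
        raise ValueError("length must allow one character from each class")
    alphabet = "".join(CLASSES)
    while True:
        candidate = "".join(secrets.choice(alphabet) for _ in range(length))
        # Retry until every class is represented (rejection sampling keeps
        # the result uniform over the acceptable passwords).
        if all(any(c in cls for c in candidate) for cls in CLASSES):
            return candidate


print(strong_password())
```

Using `secrets` rather than `random` matters here: `secrets` draws from the operating system's cryptographically secure source.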

For Internet users, there are several factors which increase the risk of falling victim to websites booby-trapped with malicious code. These include the use of pirated software, failure to install security patches, failure to run a security solution, and a general lack of awareness or knowledge of Internet threats.

Pirated software plays a major role in computers becoming infected. Pirate copies of Microsoft Windows generally will not update themselves automatically with the latest security patches, meaning they are wide open for newly identified vulnerabilities to be exploited.

Additionally, older versions of Internet Explorer (still the most widely used browser) are vulnerable to countless exploits. Typically, any malicious website will be able to exploit an unpatched Internet Explorer 6.0. Because of this, it’s extremely important to avoid using pirate software, especially pirate copies of Windows.

Another risk factor is failure to install a security solution. Even if the system itself is up to date, it could be infected via 0-day vulnerabilities in third-party software. Security solutions are usually updated far more quickly than software patches are produced, and provide a much-needed layer of protection during the vulnerability window.

While patching is important in helping keep computers secure, the ‘human factor’ also plays a role. For instance, a user might try to watch a ‘funny clip’ they have downloaded from the Internet, which turns out to be malware. Some websites actually resort to this trick when their exploits fail to infect the system. This example shows why users need to be aware of Internet threats, particularly those associated with Web 2.0 social networks, which cybercriminals have recently been targeting more and more.

Below are a few points on how to protect against attacks:

- Don’t download pirate software

- Keep all software up-to-date, including the operating system, web browsers, PDF readers, music players and so on.

- Install and use a security solution such as Kaspersky Internet Security 2010

- Encourage employees to spend a few hours every month visiting security-related websites such as https://securelist.com, where they can learn about the dangers of the Internet and how to stay protected.

Finally, remember that prevention is always better than cure, and take appropriate steps to secure your systems.
