What a week to be in Boston! I was heading to Source Conference the very same day the blast happened. It's hard to describe all the intense emotions I felt when I arrived. As President Obama said today to the city of Boston: "You will run again". All my best to you guys, stay strong.

In my presentation at Source I talked about fraud on Twitter. These days we find a lot of spam bots on this social network. Some blindly send unsolicited direct messages to other users, while others first run some semantic analysis on your tweets so they can send a more targeted message.

These bots are usually easy to identify and are promptly shut down by Twitter, but they are recreated just as easily. One porn spam campaign with more than 5000 active bots was creating 250 new ones a day. For some campaigns the half-life of the fake profiles is as low as 45 minutes.
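To get a feel for what a 45-minute half-life means, here is a minimal sketch. It assumes that profile takedowns follow simple exponential decay, which is my simplification for illustration and not something measured in the campaigns; the function names are hypothetical.

```python
import math


def surviving_fraction(elapsed_minutes, half_life_minutes=45.0):
    """Fraction of a batch of fake profiles still alive after elapsed_minutes.

    Assumes exponential decay (an illustrative simplification).
    """
    return 0.5 ** (elapsed_minutes / half_life_minutes)


def minutes_until_fraction(target_fraction, half_life_minutes=45.0):
    """Minutes until only target_fraction of the batch is left."""
    return half_life_minutes * math.log(target_fraction) / math.log(0.5)


if __name__ == "__main__":
    for minutes in (45, 90, 180):
        print(minutes, "min ->", round(surviving_fraction(minutes), 4))
```

Under that assumption, half of a batch is gone after 45 minutes and three quarters after 90, which helps explain why such campaigns need a constant supply of new profiles.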

These bots are obviously against the interest of spammed users, but also against the interest of the social network itself.

Interestingly, many companies offer this service as "digital social advertising". You can see how the same profiles are rotated on a regular basis, with the profile description and picture changed both to avoid detection and to adapt them to the new campaign:

Several bots used this profile picture:

And the same bots one week later:

Apart from the spam messages themselves, many of the bots send tweets built from a common dictionary, trying to disguise themselves as legitimate profiles. However, this makes them easier to detect. That's why they are starting to play new tricks to avoid detection based on semantic analysis: they send random messages made of words that any semantic analysis would usually ignore. Here you can see some real examples:

- if its do you me your my do it my be find is but on are its rt that was

- I a me at get out your they on rt if I get rt can a

- u you rt find in I that that your my my find one you so is is my you this but get all a one its it

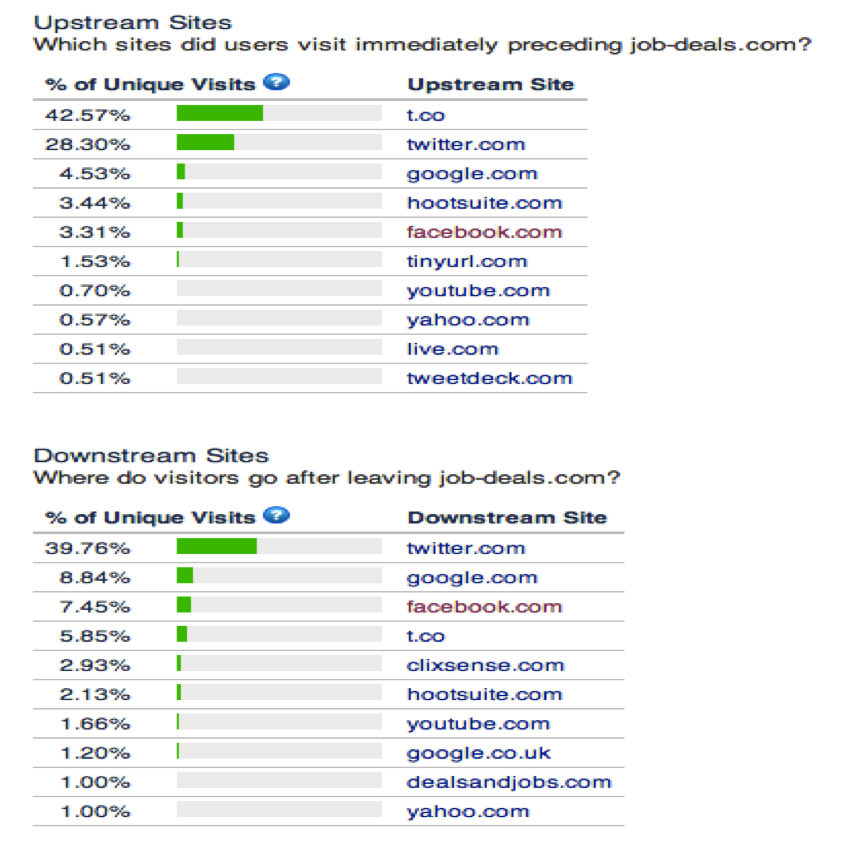
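The trick can also backfire: a tweet made almost entirely of ignored words is itself a signal. The following is only a rough sketch of that idea, not the detection method from my presentation. The `STOP_WORDS` list, the `threshold` and the `min_tokens` values are hypothetical choices made for illustration.

```python
import re

# Hypothetical, hand-picked list of words that semantic analysis tends to
# ignore, plus Twitter filler such as "rt" and "u". A real system would use
# a much larger list.
STOP_WORDS = frozenset(
    "a all and are at be but can do find for get i if in is it its me my of "
    "on one out rt so that the they this to u was you your".split()
)


def tokenize(tweet):
    """Lowercase the tweet and split it into alphabetic tokens."""
    return re.findall(r"[a-z']+", tweet.lower())


def stop_word_ratio(tweet):
    """Share of tokens that are in STOP_WORDS (0.0 for an empty tweet)."""
    tokens = tokenize(tweet)
    if not tokens:
        return 0.0
    return sum(1 for t in tokens if t in STOP_WORDS) / len(tokens)


def looks_like_filler(tweet, threshold=0.9, min_tokens=8):
    """Flag tweets that are long enough and almost entirely stop words."""
    if len(tokenize(tweet)) < min_tokens:
        return False
    return stop_word_ratio(tweet) >= threshold


if __name__ == "__main__":
    sample = "I a me at get out your they on rt if I get rt can a"
    print(looks_like_filler(sample), round(stop_word_ratio(sample), 2))
```

The `min_tokens` guard is there because very short legitimate tweets ("is it on?") can also consist only of such words, so the ratio alone would produce false positives.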

Some of these campaigns are not limited to Twitter: we are starting to see them target several social networks, including Facebook. For instance, the job-deals.com campaign (active since the beginning of April) mainly hits Twitter, but the Upstream and Downstream sites reported by Alexa show that Facebook users are being hit as well:

These bots are not just a nuisance for users; they can be a real threat when used to send more than just spam. What's even more worrisome, they are often used along with hacked accounts, which increases the chances that receivers click on the tweet because they believe it comes from a friend.

We can see how a recent campaign used this technique to hijack accounts with the incredibly original message "LOL, funny pic of you" followed by the link to the malicious landing site:

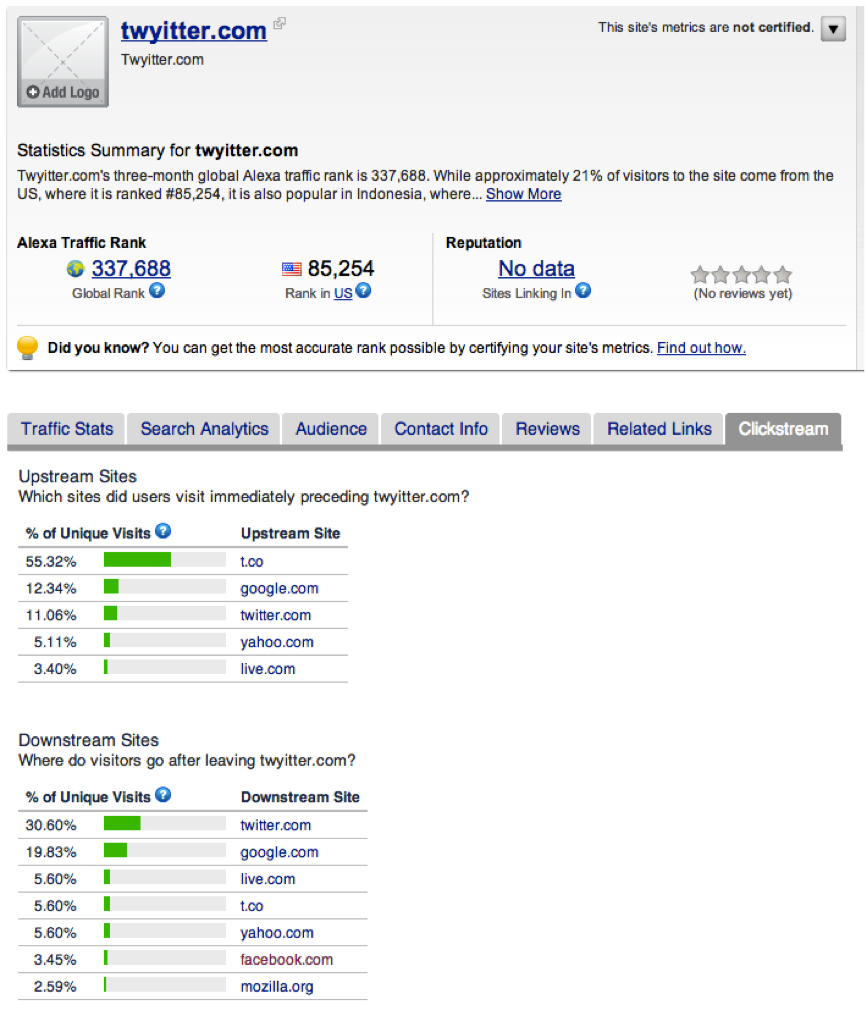

Several domains were used for this campaign, and again we see that some of them were likely spread on other social networks too:

There is much more fraud on social networks, such as Twitter being used to spread malware, to communicate with malware, or to serve hacktivists' interests, as recently happened with Venezuela's election:

There is much more to cover, but that's probably enough for a blog post. However, if you are interested in the topic, and in how you can use machine learning to detect these malicious profiles, you can check my presentation here:

Is digital marketing the new spam?