Is it possible to define human intelligence so precisely that it can then be simulated by machines? That question is still very much a bone of contention within the scientific community. Developers trying to create artificial intelligence use widely varying approaches, but many believe that artificial neural networks are the way forward. As things stand today, no device containing artificial intelligence has successfully passed the Turing test. The famous British computer scientist Alan Turing proposed that a machine could be considered truly intelligent in its own right if a user were completely unable to tell whether they were interacting with a machine or with another human being. One potential application of autonomous artificial intelligence is in the field of computer virology: the development of systems that remotely treat infected computers.

The main task facing artificial intelligence (AI) researchers at present is to create an autonomous AI device that is fully capable of learning, making informed decisions and modifying its own behavioral patterns in response to external stimuli. It is possible to build highly specialized bespoke systems, and it is possible to build more universal and complex AI. However, such systems are always based on experience and knowledge provided by humans in the form of behavioral examples, rules or algorithms.

Why is it so difficult to create autonomous artificial intelligence? Because a machine does not possess human qualities such as consciousness, intuition and the ability to distinguish between important and minor things; most importantly, it lacks any thirst for new knowledge. These qualities give people the ability to find solutions to problems, even non-linear ones. To perform work, AI currently requires algorithms that have been predetermined by humans. Nevertheless, attempts to reach the holy grail of true AI are constantly being made, and some of them are showing signs of success.

The costs of non-automated processing

The process of detecting malware and restoring a computer's normal operating parameters involves three main steps, regardless of who or what performs each step, be it a human or a machine. The first step is the collection of objective data about the computer under investigation and the programs it is running. This is best achieved with high-speed automated tools that produce machine-readable reports and operate without human intervention.

The second step involves subjecting the collected data to detailed scrutiny. For example, if a report shows that a suspicious object has been detected, that object must be quarantined and thoroughly analyzed to determine what threat it poses, and a decision must be made about what further action is required.

The third step is the actual treatment, for which a special scripting language can be used. A treatment script contains the commands needed to remove malware files and restore the computer's normal operating parameters.
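The three steps can be pictured as a simple pipeline. The Python sketch below is purely illustrative. The `ScannedObject` and `Report` types, the `collect`/`analyze`/`treat` functions and the one-line command format are hypothetical names invented for this example; they are not part of AVZ or Cyber Helper. The collection step is also stubbed with a dictionary instead of a real scan.

```python
from dataclasses import dataclass, field


# Hypothetical data model for illustration only; not the AVZ report format.
@dataclass
class ScannedObject:
    path: str
    suspicious: bool  # flag set by the (stubbed) automated collector


@dataclass
class Report:
    objects: list[ScannedObject] = field(default_factory=list)


def collect(scan_results: dict[str, bool]) -> Report:
    """Step 1: gather objective, machine-readable data (stubbed: no real scan)."""
    return Report([ScannedObject(path, flag) for path, flag in scan_results.items()])


def analyze(report: Report, known_malware: set[str]) -> dict[str, str]:
    """Step 2: decide what to do with each object in the report."""
    decisions: dict[str, str] = {}
    for obj in report.objects:
        if obj.path in known_malware:
            decisions[obj.path] = "delete"      # already known to be malicious
        elif obj.suspicious:
            decisions[obj.path] = "quarantine"  # needs closer analysis first
    return decisions


def treat(decisions: dict[str, str]) -> list[str]:
    """Step 3: turn decisions into script commands (hypothetical command format)."""
    return [f"{action} {path}" for path, action in sorted(decisions.items())]


report = collect({"C:/a.exe": True, "C:/b.exe": False, "C:/c.exe": True})
script = treat(analyze(report, known_malware={"C:/c.exe"}))
print(script)  # ['quarantine C:/a.exe', 'delete C:/c.exe']
```

Keeping the steps as separate functions mirrors the point made above: each step can be carried out by a person or a machine without changing the others.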

Broadly speaking, until just a few years ago, steps two and three were performed with almost no automation by analysts working for IT security companies and by experts on specialized forums. However, as the number of users falling victim to malware and needing help grew, this approach ran into a number of problems:

- When protocols and quarantine files are processed manually, a virus analyst faces huge volumes of continually changing information that need to be absorbed and fully understood, a process that is never fast.

- A human being has natural psychological and physiological limits. Any specialist can get tired or make a mistake; the more complex the task, the higher the chances are of making a mistake. For example, an overburdened malware expert may not notice a malicious program, or conversely, may delete a legitimate application.

- The analysis of quarantined files is very time-consuming, because the expert needs to consider the unique features of each sample, i.e., where and how it appeared and what is suspicious about it.

These problems can only be resolved by fully automating the analysis and treatment of malware. However, numerous attempts to do this using various algorithms have so far yielded no positive results. The main reason for this failure is that malware is constantly evolving: every day, dozens of new malware programs with ever more sophisticated methods of embedding and disguising themselves appear on the Internet. As a result, detection algorithms need to be extremely complex. Worse still, they become outdated very rapidly and must be constantly updated and debugged. Another problem, of course, is that the effectiveness of any algorithm is naturally limited by the ability of its creators.

The use of expert systems for ‘catching’ viruses appears to be somewhat more effective. However, developers of expert antivirus systems face problems similar to those described above: the effectiveness of a system depends on the quality of the rules and knowledge bases it uses. Additionally, these knowledge bases have to be constantly updated, which once again means investing in human resources.

General principles of operation of the Cyber Helper system

Despite these difficulties, experiments in this field have over time led to some successes. One of them is the Cyber Helper system, a successful attempt to get closer to employing truly autonomous AI in the battle against malware. Most of Cyber Helper’s autonomous subsystems are able to synchronize, exchange data and work together as if they were a single unit. Naturally, they contain some ‘hard’ algorithms and rules, as conventional programs do. For the most part, however, they operate using fuzzy logic and independently define their own behavior as they solve different tasks.
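The article does not describe Cyber Helper’s fuzzy rules, so the following is only a generic illustration of the technique it names. The sketch grades a file’s ‘suspiciousness’ with linear membership functions. It then combines two rules using the standard min (fuzzy AND) and max (fuzzy OR) operators. The input features (section entropy, number of autostart entries, digital signature) and the thresholds are hypothetical choices made for this example.

```python
def ramp(x: float, low: float, high: float) -> float:
    """Membership degree rising linearly from 0 at `low` to 1 at `high`."""
    if x <= low:
        return 0.0
    if x >= high:
        return 1.0
    return (x - low) / (high - low)


def suspicion(entropy: float, autostart_entries: int, signed: bool) -> float:
    """Fuzzy suspicion score in [0, 1]; features and thresholds are hypothetical."""
    packed = ramp(entropy, 6.0, 7.5)            # degree to which the file "looks packed"
    persistent = ramp(autostart_entries, 0, 3)  # degree to which it "persists aggressively"
    unsigned = 0.0 if signed else 1.0           # crisp input treated as a membership degree
    rule1 = min(packed, unsigned)               # packed AND unsigned
    rule2 = min(persistent, unsigned)           # persistent AND unsigned
    return max(rule1, rule2)                    # rule1 OR rule2


print(suspicion(7.5, 0, signed=False))   # 1.0
print(suspicion(6.75, 0, signed=False))  # 0.5
print(suspicion(7.5, 3, signed=True))    # 0.0
```

Unlike a hard threshold, this approach gives a file that is only somewhat packed a partial score rather than a yes/no answer. That property makes fuzzy rules useful when the evidence is ambiguous.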

At the heart of the Cyber Helper system is a utility called AVZ, which the author of this article created in 2004. AVZ was specifically designed to automatically collect data from potentially infected computers and malware samples, and to store it in machine-readable form for use by other subsystems. The utility creates reports in HTML format for analysis by humans and in XML for machine analysis. Since 2008, the AVZ core has been integrated into Kaspersky Lab’s antivirus solutions.
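The following minimal Python sketch illustrates why a machine-readable report matters. It parses an XML report with the standard `xml.etree.ElementTree` module and cross-references two sections of it. The element and attribute names (`report`, `process`, `autorun`, `signed` and so on) are invented for this example; AVZ’s actual XML schema is not reproduced here.

```python
import xml.etree.ElementTree as ET

# Hypothetical report layout; AVZ's real XML schema is not reproduced here.
SAMPLE_REPORT = r"""<report>
  <process path="C:\Windows\explorer.exe" signed="1"/>
  <process path="C:\Windows\system32\ntos.exe" signed="0"/>
  <autorun key="HKLM\Software\Microsoft\Windows\CurrentVersion\Run"
           value="C:\Windows\system32\ntos.exe"/>
</report>"""


def unsigned_autostarts(xml_text: str) -> list[str]:
    """Return autostart targets that are also running as unsigned processes."""
    root = ET.fromstring(xml_text)
    unsigned = {p.get("path") for p in root.iter("process") if p.get("signed") == "0"}
    return sorted(a.get("value") for a in root.iter("autorun") if a.get("value") in unsigned)


print(unsigned_autostarts(SAMPLE_REPORT))  # ['C:\\Windows\\system32\\ntos.exe']
```

A human reading the HTML version would have to spot this link between the process list and the autostart list by eye. With XML, the check is a few lines of code.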

The Cyber Helper system’s general operation algorithm (steps 1 to 6)

The system’s operating algorithm consists of six steps. In the first step, the AVZ core performs an antivirus scan of the infected computer and transfers the results, in XML format, to the other Cyber Helper subsystems for analysis.

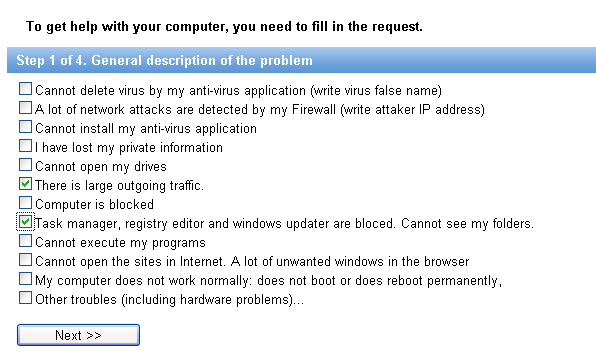

When a request for treatment is made, it is important to answer all the questions concerning the system

The system analyzes the received protocol using the enormous volume of data already available on similar malware programs and the remedial actions previously performed in similar cases, as well as other factors. In this respect, Cyber Helper resembles an active human brain, which must accumulate knowledge about its surroundings in order to process information, especially when it is first activated. For children to develop fully, it is vital that they are continually aware of what is happening in their world and that they can readily communicate with other people. Here a machine has an advantage over a human, as it can store, retrieve and process much larger volumes of information in a given time span.
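One simple way to reuse remedial actions from similar cases is case-based retrieval: find the past protocol most similar to the current one and propose its actions. The sketch below measures similarity with the Jaccard index over the sets of file hashes seen in each protocol. This technique is an assumption chosen for illustration, since the article does not say how Cyber Helper measures similarity. `PastCase`, `suggest_actions` and the 0.5 threshold are likewise hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PastCase:
    """Hypothetical record of a previously treated protocol."""
    object_hashes: frozenset[str]  # hashes of the objects found in that protocol
    actions: tuple[str, ...]       # remedial actions that were applied


def jaccard(a: frozenset[str], b: frozenset[str]) -> float:
    """Size of the intersection divided by the size of the union."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def suggest_actions(current: set[str], history: list[PastCase],
                    threshold: float = 0.5) -> tuple[str, ...]:
    """Return the actions of the most similar past case, or () if none is similar enough."""
    seen = frozenset(current)
    best = max(history, key=lambda case: jaccard(seen, case.object_hashes), default=None)
    if best is None or jaccard(seen, best.object_hashes) < threshold:
        return ()
    return best.actions


history = [
    PastCase(frozenset({"h1", "h2", "h3"}), ("delete file with hash h3",)),
    PastCase(frozenset({"h7", "h8"}), ("delete file with hash h8",)),
]
print(suggest_actions({"h1", "h2", "h3", "h4"}, history))  # ('delete file with hash h3',)
print(suggest_actions({"h9"}, history))                    # ()
```

The more cases the history holds, the more often a new protocol finds a close match. This is the machine's advantage in storage and retrieval that the paragraph above describes.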

An example of an instruction and a treatment/quarantine script written by the Cyber Helper system without any human participation

Another similarity between Cyber Helper and human beings is that Cyber Helper can analyze protocols independently, with almost no prompting, and constantly teach itself in an ever-changing environment. When it comes to self-learning, Cyber Helper faces three main challenges:

- mistakes made by human experts, or expert actions that the machine cannot logically interpret;
- incomplete and inconsistent information about programs;
- data that is refined repeatedly and entered into the system with delays.

Let’s look at them in more detail.

The difficulties of implementation

Experts processing protocols and quarantine files can make mistakes or perform actions that cannot be logically explained from a machine’s perspective. Here’s a typical example. A specialist sees in a protocol an unknown file called %System32%\ntos.exe that has the characteristics of a malware program. On the basis of experience and intuition, the specialist deletes it without quarantining it for further analysis. The details of the specialists’ actions, and how they arrive at their conclusions, therefore cannot always be translated directly into something a machine can be taught.

Treatment information may also be incomplete or contradictory. For example, before seeking expert assistance, a user may have tried to fix the problem on his or her computer and deleted only part of the malware program, for instance by restoring infected program files without cleaning the registry.

Finally, the third typical problem: during protocol analysis, only metadata about a suspicious object is available, and after the quarantined file has been analyzed, only preliminary information about the object is available. Only then is the object categorized as either a malware program or a ‘clean’ program. This information usually becomes available only after repeated refinement and a considerable amount of time, anywhere from minutes to months. The classification may take place both externally, in an analytical services laboratory, and inside Cyber Helper’s own subsystems.
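This gradual refinement can be modeled by storing every verdict together with the stage at which it was produced. Deeper analysis then overrides shallower analysis, and newer results override older ones at the same stage. In this sketch the three stages follow the article’s description: protocol metadata, analysis of the quarantined file, and final categorization. The `Stage` and `ObjectRecord` names and the ordering rule are assumptions made for illustration.

```python
from dataclasses import dataclass, field
from datetime import datetime
from enum import IntEnum


class Stage(IntEnum):
    """Hypothetical analysis stages, ordered from least to most informative."""
    METADATA = 1     # only protocol metadata is available
    PRELIMINARY = 2  # the quarantined file has been analyzed
    CATEGORIZED = 3  # classification settled after repeated refinement


@dataclass
class ObjectRecord:
    entries: list[tuple[Stage, datetime, str]] = field(default_factory=list)

    def add(self, stage: Stage, when: datetime, verdict: str) -> None:
        self.entries.append((stage, when, verdict))

    def current_verdict(self) -> str:
        if not self.entries:
            return "unknown"
        # A deeper stage wins; within the same stage, the newer verdict wins.
        return max(self.entries, key=lambda e: (e[0], e[1]))[2]


record = ObjectRecord()
record.add(Stage.METADATA, datetime(2010, 10, 1), "suspicious")
record.add(Stage.PRELIMINARY, datetime(2010, 10, 2), "clean")
record.add(Stage.PRELIMINARY, datetime(2010, 11, 15), "malware")
print(record.current_verdict())  # malware
```

The full history is kept rather than overwritten. That way, every change of verdict remains available as material for self-learning.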

Let’s look at a typical example. An analyzer checks a file, finds nothing dangerous in its behavior and passes this information on to Cyber Helper. Some time later, the analyzer is upgraded and re-analyzes the suspicious file, only this time it returns the opposite verdict. The same problem can occur with the conclusions drawn by virus analysts about programs whose classification is debatable, such as remote administration programs or utilities that cover a user’s tracks. Their classification may change from one version to the next. Because of this volatility and ambiguity in the parameters of the analyzed programs, every decision Cyber Helper takes is based on more than fifty different independent analyses. The priority of each type of analysis and the significance of its results change constantly as the intelligent system continues to learn.
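The article states that each decision draws on more than fifty independent analyses whose significance changes as the system learns, but it does not give the combination rule. One plausible and deliberately simple way to express that idea is a weighted average. Each analyzer's weight is nudged up or down according to its track record. The function names, the 0-to-1 score convention and the 10% learning rate below are assumptions.

```python
def combine(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted average of analyzer scores in [0, 1], where 1 means 'malicious'.

    Analyzers without a learned weight default to 1.0. Re-running an upgraded
    analyzer simply overwrites its entry in `scores`.
    """
    total = sum(weights.get(name, 1.0) for name in scores)
    if total == 0:
        return 0.5  # no usable evidence: stay undecided
    return sum(score * weights.get(name, 1.0) for name, score in scores.items()) / total


def update_weight(weight: float, was_correct: bool, rate: float = 0.1) -> float:
    """Raise the weight of an analyzer that was right, lower it if it was wrong."""
    return weight * (1 + rate) if was_correct else weight * (1 - rate)


weights = {"static": 3.0, "behavior": 1.0}
scores = {"static": 1.0, "behavior": 0.0}
print(combine(scores, weights))  # 0.75

# The behavioral analyzer's earlier 'clean' verdict turned out to be wrong.
weights["behavior"] = update_weight(weights["behavior"], was_correct=False)
```

Because scores are keyed by analyzer name, the upgraded analyzer in the example above replaces its old verdict instead of adding a contradictory one.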

On the basis of the information available at the time, the Cyber Helper analyzer puts forward hypotheses about which objects in a protocol may constitute a threat and which can be added to the database of ‘clean’ files. Based on these hypotheses, AVZ automatically writes scripts to quarantine the suspicious objects. The script is then transferred to the user’s machine for execution. This is step 2 of the Cyber Helper system’s general operation algorithm.
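The following is a minimal sketch of this step. Objects are split into ‘suspicious’ and ‘probably clean’ hypotheses by thresholding a suspicion score, and a quarantine script is generated for the suspicious ones. The emitted text is loosely modeled on the Pascal-like `begin`/`end.` syntax of AVZ scripts with a `QuarantineFile(path, comment)` command. Treat the exact command name and signature as an assumption to verify against AVZ’s own documentation. The scores and thresholds are hypothetical.

```python
def form_hypotheses(scores: dict[str, float], suspect_at: float = 0.7,
                    clean_at: float = 0.1) -> tuple[list[str], list[str]]:
    """Split objects into suspicious and probably-clean hypotheses (thresholds are hypothetical)."""
    suspects = sorted(path for path, s in scores.items() if s >= suspect_at)
    clean = sorted(path for path, s in scores.items() if s <= clean_at)
    return suspects, clean


def quarantine_script(suspects: list[str]) -> str:
    """Emit a Pascal-like quarantine script (command name modeled on AVZ; verify before use)."""
    lines = ["begin"]
    for path in suspects:
        quoted = path.replace("'", "''")  # Pascal string literals double embedded quotes
        lines.append(f"  QuarantineFile('{quoted}', '');")
    lines.append("end.")
    return "\n".join(lines)


suspects, clean = form_hypotheses({
    r"C:\Windows\system32\ntos.exe": 0.92,
    r"C:\Windows\explorer.exe": 0.02,
    r"C:\Program Files\Tool\helper.dll": 0.40,  # undecided: neither hypothesis
})
print(quarantine_script(suspects))
```

Objects that fall between the two thresholds get no hypothesis at all. They stay in the protocol until further data arrives.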

While the script is being written, the intelligent system may already have identified objects that are clearly malicious. In this case, the script can include delete commands for known malware programs or call special procedures to repair known system damage. Such situations happen quite often because Cyber Helper processes hundreds of requests simultaneously. This is typical when several users have fallen victim to the same malware program and their machines are requesting assistance. Once it has received and analyzed the required samples from one user’s machine, Cyber Helper can provide the other users with treatment scripts. It skips the quarantine stage completely, saving users’ time and data traffic. Objects received from users are analyzed under Cyber Helper’s control, and the results enlarge the Cyber Helper knowledge base regardless of the outcome. In this way, the intelligent machine can check the hypotheses formed in step 1 of the general operation algorithm and either confirm or refute them.
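In the shortcut described here, one user’s analyzed sample spares other users the quarantine round-trip. This amounts to a shared store of confirmed verdicts. The Python sketch below keys that store by file hash. The `TreatmentCache` class, its methods and the use of hashes as keys are illustrative assumptions rather than Cyber Helper’s documented design.

```python
class TreatmentCache:
    """Hypothetical store of confirmed verdicts shared across all incoming requests."""

    def __init__(self) -> None:
        self.confirmed_malware: set[str] = set()  # file hashes confirmed as malicious

    def confirm(self, file_hash: str) -> None:
        """Record the outcome of analyzing a sample received from some user."""
        self.confirmed_malware.add(file_hash)

    def plan(self, suspects: dict[str, str]) -> dict[str, list[str]]:
        """Map each suspicious path (path -> hash) to 'delete' or 'quarantine'."""
        plan: dict[str, list[str]] = {"delete": [], "quarantine": []}
        for path, file_hash in sorted(suspects.items()):
            action = "delete" if file_hash in self.confirmed_malware else "quarantine"
            plan[action].append(path)
        return plan


cache = TreatmentCache()
first_user = cache.plan({r"C:\Windows\system32\ntos.exe": "hash-A"})  # must quarantine
cache.confirm("hash-A")                                                # sample analyzed
second_user = cache.plan({r"D:\Windows\system32\ntos.exe": "hash-A",
                          r"D:\Temp\unknown.exe": "hash-B"})
print(second_user)
```

Keying on content hashes rather than paths matters here, because the same malware may be installed under different paths on different machines.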

Cyber Helper’s technical subsystems

Cyber Helper’s main subsystems are autonomous entities that analyze the content and behavior of program files. They allow Cyber Helper to analyze malware programs and teach itself from the results. If the analysis clearly confirms that an object is malicious, the object is passed to the antivirus laboratory with a high-priority recommendation to include it in the antivirus databases. A treatment script is then written for the user (step 5 of the general algorithm). It is important to note that even after analyzing an object, Cyber Helper cannot always reach a categorical decision about its nature. When this happens, all the initial data and collected results are passed to an expert for analysis (step 6), and the expert provides the required treatment solution. Cyber Helper is not involved in this process. However, it continues to study the received quarantine files and protocols, generating reports for the expert and thereby relieving them of the lion’s share of routine work.
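The branching between step 5 and step 6 can be expressed as a simple routing rule on a combined maliciousness score. The sketch below is an assumption-laden illustration. The score scale, the 0.9 and 0.1 thresholds and the `Route` names are hypothetical. The ‘likely clean’ branch stands for objects that would be considered for the database of ‘clean’ files.

```python
from enum import Enum


class Route(Enum):
    SUBMIT_AND_TREAT = "send to lab with high priority, write treatment script"  # step 5
    ESCALATE_TO_EXPERT = "pass initial data and results to an expert"             # step 6
    LIKELY_CLEAN = "candidate for the database of 'clean' files"


def route(malice_score: float, malicious_at: float = 0.9, clean_at: float = 0.1) -> Route:
    """Decide what happens to an analyzed object (thresholds are hypothetical)."""
    if malice_score >= malicious_at:
        return Route.SUBMIT_AND_TREAT
    if malice_score <= clean_at:
        return Route.LIKELY_CLEAN
    return Route.ESCALATE_TO_EXPERT  # no categorical decision possible


for score in (0.97, 0.55, 0.03):
    print(score, route(score).name)
```

Widening the undecided band sends more work to experts but lowers the risk of an automated mistake. Narrowing it does the opposite.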

Once a request has been formulated, the system displays it to the operator again so that the operator can check that all of the input data is correct

That said, the AI system’s ‘non-intervention policy’ towards the malware analyst’s work is not absolute. Dozens of cases are known in which the intelligent machine has discovered mistakes in human actions by drawing on the experience it has accumulated and on the results of its own analysis of an object. In such cases, the machine may interrupt the analysis and decision-making process and send a warning to the expert. It may then block any scripts that, from its perspective, could harm the user’s system. The machine exercises much the same control over its own actions: while treatment scripts are being developed, another subsystem simultaneously evaluates them to catch any mistakes. The simplest example of such a mistake arises when a malware program has replaced an important system component. On the one hand, the malware program must be destroyed; on the other hand, doing so may cause irrecoverable system damage.
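The system-component dilemma above suggests a simple safeguard. Before a script is released, each delete command is checked against a list of critical system files, and any that match are held back so that a clean copy can be restored instead. The Python sketch below uses `ntpath.normcase` from the standard library to compare Windows paths case-insensitively. The contents of `CRITICAL_FILES`, the command tuple format and the `review` function are hypothetical.

```python
import ntpath

# Hypothetical list; a real system would need a far more complete inventory.
CRITICAL_FILES = {
    ntpath.normcase(r"C:\Windows\explorer.exe"),
    ntpath.normcase(r"C:\Windows\system32\userinit.exe"),
}


def review(commands: list[tuple[str, str]]) -> tuple[list[tuple[str, str]], list[str]]:
    """Split script commands into safe ones and warnings about risky deletions."""
    safe: list[tuple[str, str]] = []
    warnings: list[str] = []
    for action, path in commands:
        if action == "delete" and ntpath.normcase(path) in CRITICAL_FILES:
            # Deleting a substituted system component could leave Windows unusable;
            # the safer fix is to restore a clean copy of the file.
            warnings.append(f"blocked delete of critical file {path}; restore it instead")
        else:
            safe.append((action, path))
    return safe, warnings


safe, warnings = review([
    ("delete", r"c:\windows\SYSTEM32\userinit.exe"),
    ("delete", r"C:\Temp\dropper.exe"),
])
print(safe)
print(warnings)
```

The same check can be applied to scripts written by human experts and to those generated by the machine, which matches the two-way control described above.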

The 911 services available on the VirusInfo website can be used by anyone who wants to

Today, Cyber Helper is successfully integrated into the http://virusinfo.info antivirus portal and forms the basis of the experimental 911 system (http://virusinfo.info/911test/). In the ‘911 system’, Cyber Helper communicates directly with the user: it requests protocols, analyzes them, writes scripts for the initial scan and analyzes quarantined files. Based on the results of its analysis, the machine is permitted to treat the infected computer. Cyber Helper also assists the experts in three ways. It detects and suppresses dangerous mistakes, carries out initial analyses of all the files the experts place in quarantine, and processes quarantined data before it is added to the database of ‘clean’ files. The technology behind Cyber Helper and its principle of operation are protected by Kaspersky Lab patents.

Conclusion

Modern malware programs act and propagate extremely fast. Responding immediately requires the intelligent processing of large volumes of non-standard data. Artificial intelligence is ideally suited to this task, as it can process data far faster than a human can think. Cyber Helper is one of only a handful of successful attempts to get closer to autonomous artificial intelligence. Like an intelligent creature, Cyber Helper is able to learn by itself and define its own actions independently. Virus analysts and intelligent machines complement each other extremely well: working together, they are more effective and provide users with more reliable protection.

This article was published in the Q4, 2010 issue of Secureview

Cyber Expert. Artificial Intelligence in the realms of IT security